Snowflake Schema Checklist Before Building Your Reporting Model

A practical operator guide for fixing snowflake schema checklist before building your handoffs, ownership gaps, exceptions, and reporting noise.

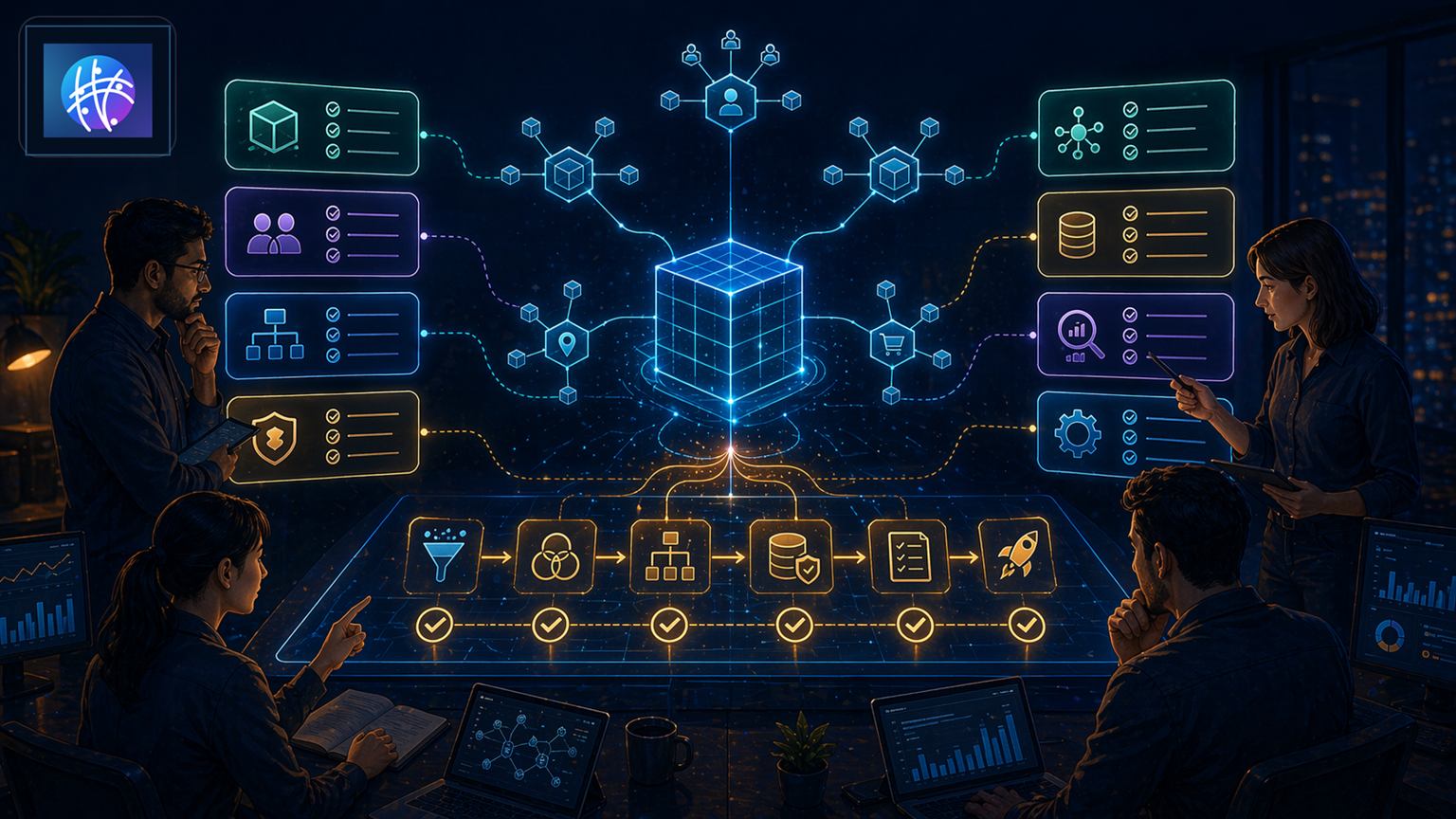

Snowflake Schema Checklist Before Building Your Reporting Model

snowflake schema checklist matters when reporting logic becomes part of how the business operates. The question is not only whether the model is technically correct. The practical question is whether a revenue, ecommerce, support, or operations teammate can trust the dimensions behind a metric and understand where the answer came from.

Snowflake Schema Checklist Before Building Your in a real operating model

Keyword and search-intent coverage

This section deliberately reinforces the search intent behind snowflake schema checklist. It also covers data warehouse schema checklist, reporting model checklist, snowflake schema design checklist, analytics model QA so the post answers the exact long-tail question while still giving operators concrete workflow detail.

In practice, snowflake schema checklist should help a team decide what changed, which system or owner is responsible, what exception path applies, and what outcome proves the workflow is working. That makes the keyword useful for readers instead of merely visible to search engines.

This guide focuses on snowflake schema checklist, plus data warehouse schema checklist, reporting model checklist, snowflake schema design checklist, analytics model QA. The practical situation is simple: a team is about to rebuild reporting and needs a simple way to decide what deserves normalization and what should stay simple. If that sounds familiar, the team is not just choosing a database pattern. It is choosing how future decisions will inherit context.

References like Dimensional modeling reference, Warehouse performance reference, and dbt modeling reference are useful because they show how modeling choices shape analytics. Operators still need to ask a harder question: which definitions should be reusable enough that teams stop rebuilding them in every dashboard?

Fact tables, dimension tables, and ownership

A fact table should capture the business event: order placed, payment captured, ticket opened, lead converted, subscription renewed, shipment delayed, or workflow completed. The fact table is where volume lives. It is the stream of things that happened.

A dimension table should explain the event: customer segment, product hierarchy, sales territory, campaign source, warehouse, lifecycle stage, owner, channel, or policy state. In a snowflake schema, some dimensions are normalized into related subdimensions so the same context can be reused instead of copied.

The ownership question is where many teams stumble. Who owns the product hierarchy? Who owns lifecycle stage definitions? Who owns channel grouping? Who decides whether a customer belongs to one segment or another? A schema without ownership becomes another place where arguments go to hide.

A practical example operators can borrow

Imagine a team is about to rebuild reporting and needs a simple way to decide what deserves normalization and what should stay simple. In a flat reporting model, each dashboard may carry its own version of customer type, product category, channel source, and owner. That feels fast until a leader asks why two reports disagree. In a snowflake schema, those reusable attributes can live in connected dimension tables so teams query the same definitions.

The value is not academic normalization. The value is operational consistency. If customer segment changes, the team updates one controlled dimension path instead of chasing stale logic across reports, alerts, automations, and spreadsheets.

A worked mini-model

A useful starting model has one fact table and three to five dimensions. For an ecommerce team, the fact table might be order events. The first dimension might be customer, with a related geography table and a related segment table. The second might be product, with a related category table and brand table. The third might be channel, with a related campaign-source table. That is the snowflake shape: shared context branches away from the fact instead of being copied into every row.

Now ask the practical question: what happens when a product changes category? In a copied model, old reports and new reports may disagree. In a controlled snowflake schema, the category relationship can be updated with ownership, history, and QA. What happens when a customer moves segments? The same principle applies. The schema gives the business a place to manage meaning instead of burying it inside dashboard formulas.

For revenue operations, the fact table might be opportunity stage movement. Dimensions might include account, owner, territory, lifecycle stage, campaign source, and partner source. If territory rolls up through region and segment, that hierarchy may deserve its own dimension path. If lifecycle stage is used for routing and reporting, it should not live as a copied label in ten downstream tools.

Operator questions before modeling

Before normalizing a dimension, ask whether the attribute changes, whether multiple teams reuse it, whether it affects automation, and whether someone owns the definition. If the answer is yes, normalization may reduce future cleanup. If the answer is no, keep the model simpler.

Also ask what the future version of the business will need. Will customer segments become more detailed? Will product categories need rollups? Will territory logic change? Will automated workflows route based on these attributes? Snowflake schema design is valuable when the future cost of copied logic is higher than the present cost of a more structured model.

This is the category shift operators should care about: reporting models are no longer just analyst artifacts. They increasingly feed alerts, routing rules, AI summaries, customer health scores, and operational decisions. When the model is vague, automation inherits vague context. When the model is owned, automation can act on cleaner business meaning.

Three use cases that make snowflake schema practical

First, ecommerce reporting. Orders are the fact. Customer, product, channel, fulfillment location, discount, and shipment state are dimensions. A snowflake schema helps when product hierarchy or customer geography needs its own maintained structure rather than being repeated everywhere.

Second, revenue operations. Opportunities or pipeline movements are the fact. Account hierarchy, territory, lifecycle stage, campaign source, rep ownership, and partner source are dimensions. When those dimensions are normalized and owned, pipeline reporting becomes easier to reconcile across leadership views.

Third, support operations. Tickets, escalations, or SLA events are facts. Customer tier, product area, issue type, agent team, region, and contract terms are dimensions. If support analytics feeds automation, routing, or customer health scoring, dimension quality directly affects execution quality.

When snowflake schema helps and when it hurts

Snowflake schema helps when the same dimension logic appears in many places, when hierarchies matter, when attributes change over time, or when teams need stronger governance around shared definitions. It can also help when dashboards, alerts, and workflow automations need to use the same modeled context.

It hurts when teams normalize too early. If a dimension is small, stable, and used in only one workflow, extra tables may create reporting friction without improving trust. The real operator move is to normalize what deserves ownership and reuse, not everything that can be normalized.

Public references such as Database schema reference and Data warehouse reference are helpful, but the implementation decision should stay grounded in business use. A schema is only successful if it makes the next operational decision easier to trust.

What breaks first in production

The first failure mode is duplicated definitions. A metric looks consistent until one dashboard groups channels differently or one automation uses an older customer segment rule. The business does not experience that as a modeling issue. It experiences it as distrust.

The second failure mode is orphaned ownership. Dimension tables exist, but nobody owns the taxonomy. Teams add values, rename categories, or change mapping logic without a review path.

The third failure mode is over-normalization. Analysts need too many joins, operators cannot explain the model, and every simple report becomes a data archaeology project. Correctness without usability still fails the business.

Rollout pattern

Start with one high-value reporting workflow. Pick a fact table that matters, such as orders, opportunities, tickets, or workflow events. Then list the dimensions people already argue about. Those are usually the first candidates for stronger modeling.

Next, decide which dimensions need ownership, history, hierarchy, or reuse. Keep simple attributes simple. Normalize dimensions when the structure reduces drift, improves QA, or supports multiple reporting and automation paths.

Finally, test the model with real questions. Can a leader answer the same question from two tools and get the same result? Can an operator trace a metric back to the dimension rule? Can automation use the modeled field without custom cleanup?

Category viewpoint

snowflake schema checklist is part of a larger market shift toward Autonomous Operations Infrastructure. The future is not more disconnected automations, more isolated dashboards, or more manual status checks. The next category is an operating layer where triggers, owners, exceptions, and outcomes stay connected across the business stack.

That is why Meshline treats snowflake schema checklist as execution infrastructure. The point is not to describe the process once. The point is to make the process observable, reviewable, and repeatable when real teams are under pressure.

Where Meshline fits

Meshline fits when snowflake schema checklist needs to support more than dashboards. Meshline is Autonomous Operations Infrastructure for trigger-to-outcome execution, ownership and control, and system-led execution. Clean schema design matters because automation inherits the quality of the data model underneath it.

Teams often pair this work with automation data sync, event routing console, and the data infrastructure glossary. The goal is not to worship a schema pattern. The goal is to make reporting, alerts, routing, and workflow decisions use the same trusted business context.

QA checklist before rollout

- Is the fact table tied to a real business event?

- Does each dimension have a clear owner?

- Are reused definitions modeled once instead of copied into dashboards?

- Are hierarchies and changing attributes handled intentionally?

- Can operators explain the model without a data engineer translating every join?

- Do automated workflows and alerts use the same modeled definitions as reporting?

- Can the team test whether the schema reduced reconciliation work?

Final takeaway

snowflake schema checklist is useful when shared reporting context deserves structure, ownership, and reuse. Start with the metric disagreement that causes the most operational cleanup, model the facts and dimensions behind it, and only normalize where it improves trust.

How to use this playbook

Start with one real snowflake schema checklist before building your workflow, not a theoretical transformation program. Pick the path where work gets stuck, customers wait, or a manager has to ask, "who owns this now?" That is where the useful signal lives.

A concrete example

For example, map the moment a request enters the business, the system that records it, the owner who decides the next action, and the notification that proves the work moved. If any of those four pieces are fuzzy, the workflow is still running on hope and calendar reminders. Brave, but not exactly scalable.

Common mistakes to avoid

- Do not automate a vague process. You will only make the confusion faster.

- Do not let two systems disagree without a named owner for reconciliation.

- Do not treat exceptions as edge cases if they happen every week. That is the process waving a tiny red flag.

- Do not measure activity when the real question is whether the outcome happened.

Monday morning checklist

- Pick the workflow with the most visible handoff pain.

- Write down the trigger, owner, next action, exception path, and success metric.

- Find one failure mode from last week and decide how it should be routed next time.

- Add one QA check that catches bad data before it becomes customer-facing work.

- Review the result after seven days and tighten the rule instead of adding another meeting.